Frederick Opoku Afriyie

MSc Computer Science graduate (Merit, St Mary's University London), software engineer and AI researcher with production experience shipping full-stack web systems, building ML models, and conducting original research. Currently building GestureKey — an independent AI sign language recognition project — while actively seeking engineering and research opportunities.

Building systems that work

I am a software engineer and AI researcher with an MSc in Computer Science (Merit, St Mary's University London). My work spans full-stack web development, machine learning, computer vision, and original research, applied to real products and publication-quality analysis.

Most recently I designed and delivered complete web systems for two commercial clients, managing the entire lifecycle: requirements, UX design, development, deployment, and ongoing maintenance. Alongside that, I have been independently building GestureKey — an AI-based sign language recognition system using computer vision — as a personal project since completing my MSc, with accessibility at its core.

I co-founded Vision’97, an independent streetwear brand shipping worldwide. I am open to engineering roles, research positions, and collaborative research opportunities.

BSc Information Technology, University of Energy and Natural Resources, Ghana

Technical capabilities

| Area | Skills |
| --- | --- |
| Languages | Python, JavaScript, SQL, Java, TypeScript, HTML / CSS |
| AI & ML | Machine Learning, Computer Vision, scikit-learn, NLP & Text Analysis, Random Forest, Model Evaluation, LLMs |
| Data & Analytics | Pandas, Power BI, Tableau, Regression Modelling, Statistical Inference, EDA, Data Visualisation |
| Web & Software | Full-Stack Dev, REST APIs, Node.js, React Native, MongoDB, Firebase, Software Architecture |
| Tools & Platforms | Git & GitHub, VS Code, Microsoft Azure, Relational Databases, Cisco / VLAN |
| Research & Design | Systematic Analysis, Cross-Validation, Technical Writing, UI/UX Design |

Where I've worked

- Representing luxury brands including Kenzo, Hugo Boss, Timberland, and Michael Kors in a high-end retail environment

- Delivering premium customer service and managing product displays in a fast-paced upmarket setting

- Supporting daily sales operations and contributing to team performance targets

- Designed and built complete full-stack web systems for two commercial clients, a hotel and a cleaning company, from requirements through to live deployment

- Delivered customer-facing booking and enquiry platforms alongside staff management backends with receipt generation and admin dashboards (live analytics, booking history, activity logs)

- Managed the full project lifecycle: client requirements, UX design, development, deployment, domain configuration, and professional email setup

- Co-founded and continue to operate an independent streetwear brand shipping worldwide

- Lead all creative direction, graphic design, and UI/UX for the e-commerce platform, growing online presence by 40%

- Developed scalable design workflows, reducing production turnaround by 15%

- Provide technical support to over 200 community members with detailed service tracking

- Developed documentation and reports that improved service delivery efficiency by 15%

- Managed data records, coordinated documentation, and supported digital reporting and operational tracking

- Contributed to information systems management in a fast-paced professional environment

Things I've built

End-to-end full-stack system for a hotel client. Built a customer-facing booking and enquiry platform, a staff backend for managing reservations and generating receipts, and an admin dashboard with live analytics, booking history, and activity tracking. Handled full deployment, domain setup, and professional email configuration.

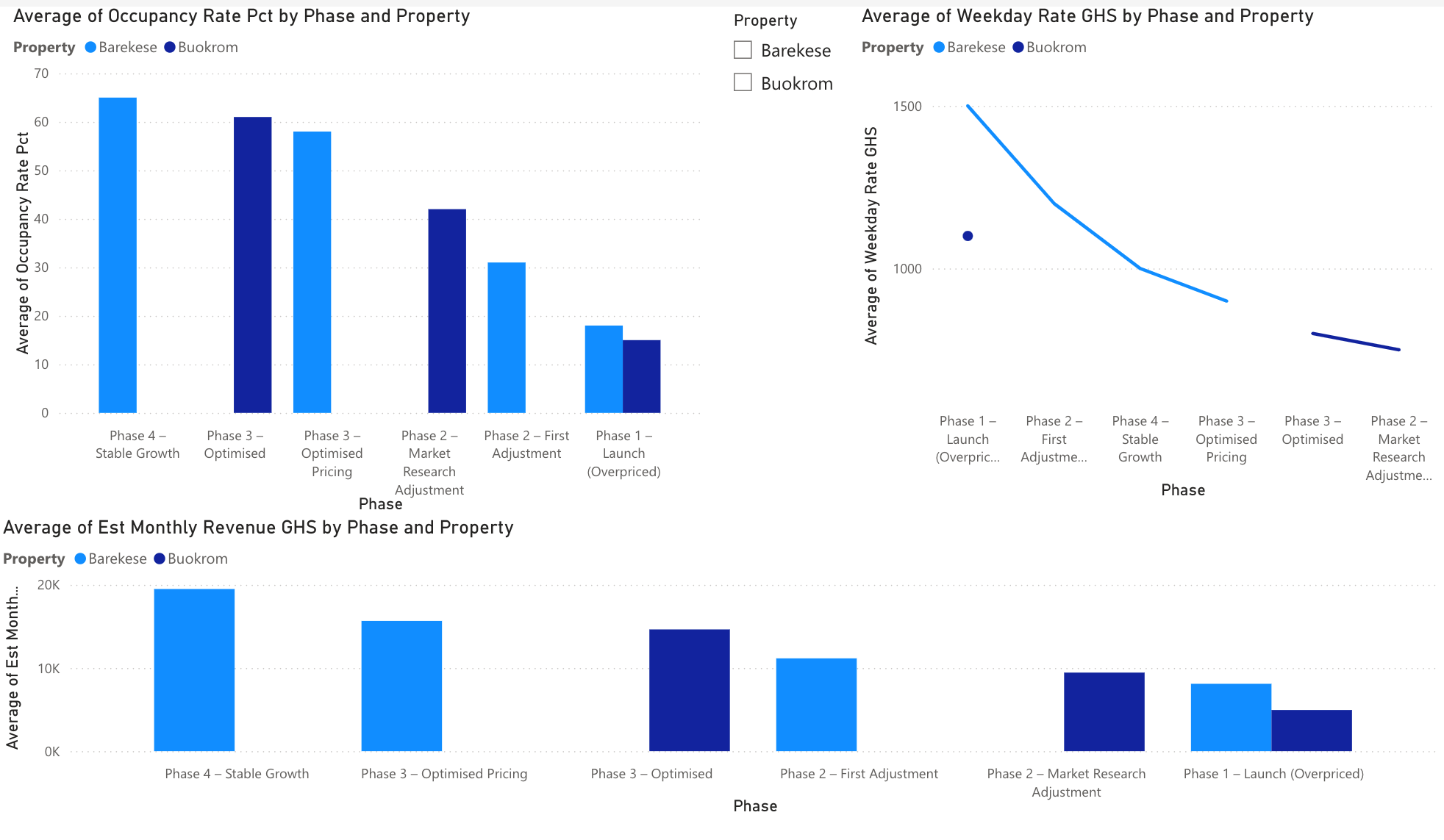

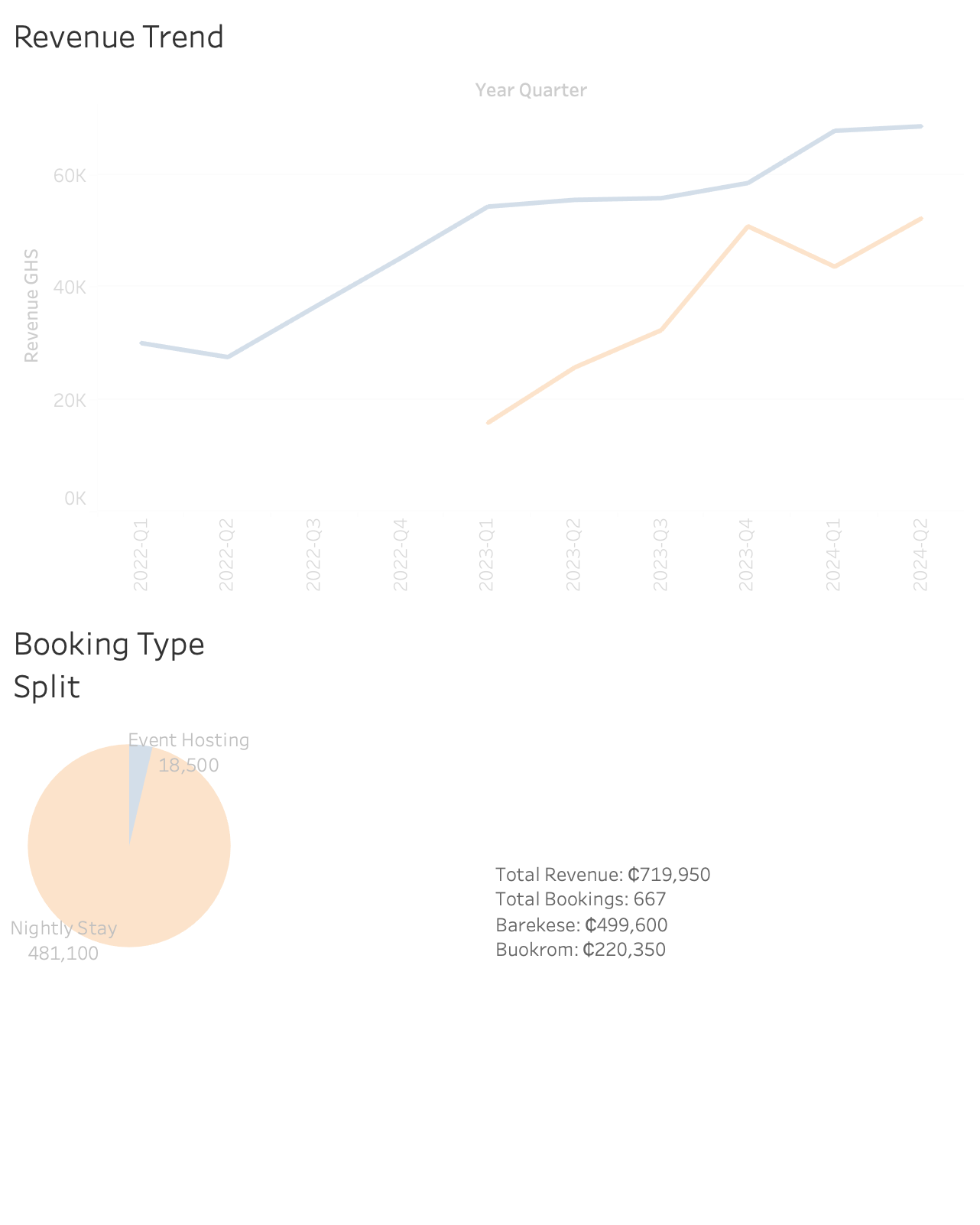

Real consulting engagement with a Ghanaian property firm: analysed booking patterns across two short-stay properties, identified overpricing as the root cause of low occupancy, and delivered a data-driven repricing strategy that produced a 290% revenue uplift. Built end-to-end: Python EDA → Excel workbook → Power BI and Tableau dashboards → live interactive Plotly Dash app deployed on Render.

Professional frontend for a cleaning and fumigation company. Built the full customer-facing site including service showcase, enquiry system, and mobile-responsive UX.

Independent research project: a computer vision system classifying sign language gestures from image datasets. Applies preprocessing pipelines, ML models, and iterative evaluation, targeting real-world accessibility impact.

End-to-end AI-powered learning platform with 8 career tracks, a built-in AI tutor, career readiness scoring, gamification rewards, and a Pomodoro productivity timer — designed to take users from zero to job-ready.

Real-world courier and package tracking web app with a live tracking timeline, multi-page site, admin dashboard, and dark mode. Built with React, Vite, and Tailwind CSS.

AI-powered tool that reads legal contracts and returns plain-English summaries, highlights risk clauses, and rewrites problematic sections — making legal documents accessible to anyone.

Official website for a UK & Ghana-based charity that provides wheelchairs, hearing aids, prosthetics, and adaptive equipment to people with disabilities across Africa. Full frontend with charity mission, impact stories, and donation pathways.

A Python + Pygame dark fantasy simulation — become a hunter, outwit the knights, collect the treasure. Three game modes, boss waves, and 25 unlockable achievements. Compiled to WebAssembly via Pygbag and playable in any browser, no install required.

Research & Scholarship

Independent research project building a machine learning system to recognise and classify sign language gestures from image datasets. The system applies image preprocessing pipelines, feature extraction, and classification models trained on labelled gesture data, iterating on model accuracy and generalisation to work toward a real-world assistive tool for the deaf and hard-of-hearing community. Research combines computer vision techniques with accessibility-focused evaluation criteria.
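A minimal sketch of the pipeline shape described above (preprocessing, feature extraction, classification), not the GestureKey implementation. Everything here is an assumption made for illustration: the tiny 2x2 grayscale "images", the labels `A` and `B`, the helper names, and the nearest-centroid classifier, which stands in for whatever models the project actually uses.

```python
import math
from collections import defaultdict


def preprocess(image):
    """Min-max scale pixels to [0, 1] so global brightness shifts matter less."""
    pixels = [p for row in image for p in row]
    lo, hi = min(pixels), max(pixels)
    span = (hi - lo) or 1  # avoid dividing by zero on a completely flat image
    return [[(p - lo) / span for p in row] for row in image]


def extract_features(image):
    """Flatten the image into a feature vector (a deliberately simple extractor)."""
    return [p for row in image for p in row]


def to_vector(image):
    """Run the preprocessing and feature-extraction steps in order."""
    return extract_features(preprocess(image))


class NearestCentroid:
    """Stand-in classifier: predict the label whose mean feature vector is closest."""

    def fit(self, vectors, labels):
        grouped = defaultdict(list)
        for vec, label in zip(vectors, labels):
            grouped[label].append(vec)
        # One centroid per label: the column-wise mean of that label's vectors.
        self.centroids = {
            label: [sum(col) / len(vecs) for col in zip(*vecs)]
            for label, vecs in grouped.items()
        }
        return self

    def predict(self, vector):
        return min(
            self.centroids,
            key=lambda label: math.dist(vector, self.centroids[label]),
        )


# Hypothetical toy data: "A" is bright on the top row, "B" on the bottom row.
train_images = [
    [[200, 210], [20, 10]],
    [[220, 230], [30, 25]],
    [[15, 20], [205, 215]],
    [[25, 30], [225, 235]],
]
train_labels = ["A", "A", "B", "B"]

model = NearestCentroid().fit([to_vector(img) for img in train_images], train_labels)

# A much darker capture of the "A" pattern, mimicking a lighting change.
dim_a = [[90, 100], [5, 10]]
print(model.predict(to_vector(dim_a)))
```

The per-image scaling step is the part that ties back to generalisation: without it, the dim image sits far from both centroids in raw pixel space, so the comparison depends on lighting rather than on the gesture pattern.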

Systematic analysis of 47 published case studies across North American, European, and Asia-Pacific residential property markets. Applied Random Forest modelling, regression analysis, and time series analysis alongside a cross-validation framework with uncertainty quantification to assess how digital systems affect operational efficiency across diverse organisational contexts.
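To make the idea of cross-validation with an uncertainty estimate concrete, here is a small standard-library sketch. It is not the framework used in the study: a one-feature least-squares line stands in for the Random Forest and regression models, the data are synthetic, and the function names are hypothetical. The uncertainty reported is simply the standard error of the per-fold errors.

```python
import random
import statistics


def k_fold_indices(n_samples, k, seed=0):
    """Shuffle sample indices once and deal them into k near-equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]


def fit_line(xs, ys):
    """Ordinary least squares for a single feature: returns (slope, intercept)."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def cross_validate(xs, ys, k=5, seed=0):
    """Return the mean absolute error measured on each held-out fold."""
    errors = []
    for held_out in k_fold_indices(len(xs), k, seed):
        held = set(held_out)
        train = [i for i in range(len(xs)) if i not in held]
        slope, intercept = fit_line([xs[i] for i in train], [ys[i] for i in train])
        fold_errors = [abs(ys[i] - (slope * xs[i] + intercept)) for i in held_out]
        errors.append(statistics.fmean(fold_errors))
    return errors


def summarise(errors):
    """Mean fold error and its standard error as a simple uncertainty estimate."""
    mean = statistics.fmean(errors)
    std_err = statistics.stdev(errors) / len(errors) ** 0.5
    return mean, std_err


# Synthetic data for illustration only: a noisy straight line.
rng = random.Random(42)
xs = [float(x) for x in range(30)]
ys = [2 * x + 1 + rng.uniform(-1, 1) for x in xs]

mean_error, std_error = summarise(cross_validate(xs, ys, k=5))
print(f"MAE across folds: {mean_error:.3f} ± {std_error:.3f}")
```

Reporting the spread across folds alongside the mean is what keeps a single favourable split from being mistaken for a reliable result.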

Academic background

Notes & thinking

When I started GestureKey, I assumed the hard part would be the model architecture. It wasn't. The hard part was the data — specifically, how inconsistent lighting, hand angle, and background noise in labelled datasets can silently destroy your model's ability to generalise. Here's what the first few months of building an accessibility-focused computer vision system taught me about the gap between benchmark accuracy and real-world performance.

Most portfolio pages say "built a full-stack system." This is what that actually meant: the data model, the API structure, how I handled the admin dashboard without a dedicated analytics service, and the decisions I'd make differently next time.

Running a cross-validation framework across 47 international case studies sounds clean on a CV. The reality involved messy data, conflicting methodologies across papers, and a lot of decisions about how to handle uncertainty quantification when your sources disagree. A reflection on the research process.

Notes from applying natural language processing to real text datasets: the difference between what LLM papers describe and what working with raw text actually requires.

Building ML systems for accessibility means your evaluation criteria are different from benchmark tasks. A short essay on why optimising for real-world use by the deaf community changes how you think about model performance.

Full posts coming soon — get in touch if you'd like to discuss any of these topics.

Let's talk

Open to full-time, part-time, and contract engineering roles. Available for an immediate start.

I'm looking for teams where I can contribute across the stack — building products, working with data, or applying ML to real problems. I am equally open to research roles, research collaborations, and PhD opportunities in AI and computer vision.

If something on this page caught your attention, reach out directly at opokufred32@gmail.com or connect on LinkedIn. References available on request.

Deployed & live

What I'm working on now

Building a computer vision system that classifies sign language gestures from image data, with the goal of creating an accessible, real-world assistive tool for the deaf and hard-of-hearing community. It combines image preprocessing, feature extraction, and ML classification, and I am iterating toward a system that generalises reliably across diverse gesture inputs.

Co-founder and lead designer of an independent streetwear brand shipping worldwide. Continuously working on new collections, brand identity, and the e-commerce experience at vision97.vision.

Working through the Google Data Analytics Professional Certificate on Coursera — deepening skills in R, advanced statistical analysis, and structured data workflows to complement existing Python and ML expertise.

Working in public

What people say

Frederick delivered exactly what we needed, a complete web system, built and deployed from scratch, that our whole team uses every day. Professional, reliable, and genuinely understood what we were trying to achieve.

Working with Frederick was seamless from start to finish. He built us a website that genuinely represents our brand — professional, clean, and it just works. Our enquiries have gone up since we launched and our clients tell us it looks great. We couldn't be happier.
